Keynote: The Future Of Search #SMX was originally published on BruceClay.com, home of expert search engine optimization tips.

You’re tuned in to the BCI blog where we’re liveblogging SMX West all week. This is the show’s opening keynote, a demo-heavy presentation by Behshad Behzadi (@BehshadBehzadi), principal engineer at Google Zurich.

Danny Sullivan with keynote speaker Behshad Behzadi (Photo credit: Search Engine Land)

Behzadi is the director of conversational search. Danny Sullivan says Behzadi previously gave this presentation at SMX London, where it was a mind-blowing look at what’s possible with conversational search. Behzadi has been at Google for 10 years: the first 7 working on ranking and the last 3 on future tech, including Now on Tap.

This is where search is moving and where Google is strategically investing more. A photo of Captain Kirk is on the screen and then a video of Kirk talking to the Star Trek computer. (The audience absolutely cracks up when a non-skippable 15-second YouTube ad is played before the Star Trek clip.)

Another video clip Behzadi plays is from the movie “Her,” where we see an artificial intelligence operating system. Both of these movies imagine a future where you talk to a machine for answers and help. We’re moving into this kind of AI experience, and it won’t be 20 years in the future or 200 years. It’s the direction we’re moving toward now and, as we’ll see in his demos, we’re already pretty close.

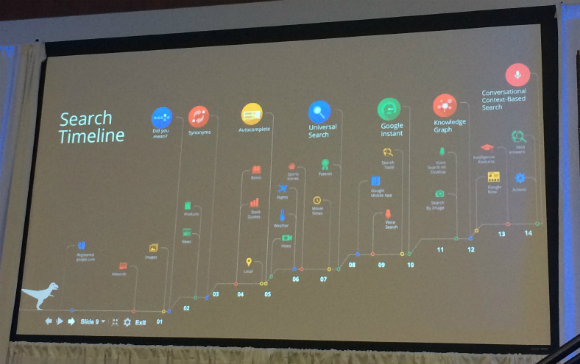

How Search Evolved to This Point

Search’s evolution over time

An early breakthrough in how search works was understanding synonyms, starting in 2002. He shows a list of queries that contain “cs” and how Google interprets “cs” differently depending on the rest of the query:

- “phd cs admission in california” → cs = computer science

- “daily prices for cs equity funds” → cs = credit suisse

- “cs bank hayfield” → cs = citizens state

- “2007 cs world cup” → cs = counter strike

- “bus amsterdam cs to airport” → cs = central station
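To make the idea concrete, here is a toy sketch of context-based abbreviation expansion using the example queries above. It is not how Google does it: the cue words in `CS_SENSES` and the function `expand_cs` are hand-picked assumptions for illustration, and the scoring is a simple count of overlapping words.

```python
from typing import Optional

# Toy illustration only: the cue words are hand-picked assumptions,
# not real search data.
CS_SENSES = {
    "computer science": {"phd", "admission", "university", "degree"},
    "credit suisse": {"equity", "funds", "prices"},
    "citizens state": {"bank", "hayfield"},
    "counter strike": {"world", "cup", "tournament"},
    "central station": {"bus", "amsterdam", "airport"},
}


def expand_cs(query: str) -> Optional[str]:
    """Return the sense of 'cs' whose cue words overlap most with the query."""
    words = set(query.lower().split())
    if "cs" not in words:
        return None
    # Score each sense by how many of its cue words appear in the query.
    scores = {sense: len(cues & words) for sense, cues in CS_SENSES.items()}
    best = max(scores, key=scores.get)
    # With no overlapping cue word there is no basis for choosing a sense.
    return best if scores[best] > 0 else None


for q in ["phd cs admission in california", "bus amsterdam cs to airport"]:
    print(q, "->", expand_cs(q))
```

The point of the sketch is only that the same two letters resolve differently once the surrounding words are taken into account.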

Another big move forward was Universal Search in 2007. Ranking becomes a much harder problem because results of different types have to be compared against each other, and that means comparing apples and oranges.

Then, in 2012, the Knowledge Graph and Google’s understanding of “things, not strings” was the next leap forward in understanding the real world. It holds 2 billion entities, 54 billion facts and 38,000 types of entities, and it keeps growing. (Behzadi updates these numbers every time he gives a presentation.)

The World Is Changing

The world is becoming increasingly mobile. In 2015 people conducted more searches on phones and tablets than on desktop. So, when you want to build the future, you think of mobile. Besides smartphones, there are other devices that move with you and know about the context of where you are. These include wearables like watches, and even smart cars.

In the mobile world people increasingly use speech. With new devices, speech is the easiest and sometimes the only input method.

- The share of searches done by voice is growing faster than typed search.

- The reason for the growth in voice search is that speech recognition today is actually working.

- Today, the speech recognition word error rate is 8 percent.
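For readers unfamiliar with the metric: word error rate is conventionally the number of word substitutions, deletions and insertions needed to turn the recognizer’s output into the reference transcript, divided by the number of words in the reference. Assuming the 8 percent figure uses that standard definition, it means roughly 8 word errors per 100 words spoken. A minimal sketch of the calculation follows; the function name `word_error_rate` and the sample sentences are illustrative, not from the talk.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from output
            insertion = curr[j - 1] + 1  # extra word in output
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One wrong word out of five reference words.
print(word_error_rate("show me pictures of wales", "show me pictures of whales"))
```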

Another thing to note is that speech search is normal. No one thinks it’s weird when you speak searches into your phone today. Therefore, people use more natural sentences instead of query language.

“What’s the weather like in Paris?” vs. “weather Paris”

In this mobile world, people find the answers to their needs in both apps and the web.

What’s the Future of Search, Then?

To build the ultimate assistant.

The ultimate assistant should understand:

- The world

- You and your world

- Your current context

Demos!

- Answers about the world

- Answers about you

- Apps

- Actions

- Contexts and conversations

- Now on Tap

Answers about the world

Such as, “Show me a list of cocktails made with vodka?”

Answers about you

Such as, “When is my next flight?” or “What is the address of my work?”

Along with your email, you can search your calendar for events and your photos for, say, dolphins. The photo recognition is pretty strong.

Apps

He asks Google to play a song by title.

Actions

You can set an alarm for any time just by asking.

Contexts and conversations

From speech recognition to understanding: the first voice query he tries is “How high is Rigi?” and Google doesn’t understand the question. Then he says “mountains in the Alps,” and Google lists them. When he asks “How high is Rigi?” again, Google can now answer with the height of the mountain Rigi, because the previous query supplied the context.
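One way to picture the Rigi demo is a session that remembers the entities from earlier turns. The sketch below is a toy model under that assumption, not a description of Google’s system: the `Conversation` class, the `LISTS` and `HEIGHT_M` tables and the approximate heights are all hypothetical illustration data.

```python
from typing import Optional

# Hypothetical toy data; heights are approximate and for illustration only.
LISTS = {"mountains in the alps": ["Matterhorn", "Rigi"]}
HEIGHT_M = {"matterhorn": 4478, "rigi": 1798}


class Conversation:
    """Keeps the entities mentioned in earlier turns of one session."""

    def __init__(self) -> None:
        self.context = set()

    def ask(self, query: str) -> Optional[str]:
        q = query.lower().strip(" ?")
        if q in LISTS:
            # A list answer puts its entities into the session context.
            self.context.update(name.lower() for name in LISTS[q])
            return ", ".join(LISTS[q])
        if q.startswith("how high is "):
            name = q[len("how high is "):]
            # Assumption: an unfamiliar name is only resolved once an
            # earlier turn has introduced it.
            if name in self.context:
                return f"{HEIGHT_M[name]} m"
        return None


session = Conversation()
print(session.ask("How high is Rigi?"))      # no context yet
print(session.ask("mountains in the Alps"))  # introduces the entities
print(session.ask("How high is Rigi?"))      # resolved from context
```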

Voice correction: You might ask, “Show me pictures of Wales” and you’ll get back pictures of whales. Then you say “w-a-l-e-s” and Google understands it’s a correction and shows pictures of Wales.
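The correction step can be sketched as: detect that the new utterance is a spelled-out word, then swap it in for the most similar word in the previous query. This is a minimal illustration using Python’s standard `difflib`; the function name `apply_spelled_correction` and the 0.6 similarity cutoff are assumptions, and nothing here reflects how Google implements it.

```python
import difflib
import re


def apply_spelled_correction(previous_query: str, utterance: str) -> str:
    """Apply a spelled-out word such as 'w-a-l-e-s' to the previous query.

    If the utterance is not a spelled-out word, or no word in the previous
    query is similar enough, the utterance is returned as a new query.
    """
    text = utterance.strip().lower()
    # Single letters joined by hyphens, e.g. "w-a-l-e-s".
    if not re.fullmatch(r"[a-z](?:-[a-z])+", text):
        return utterance
    spelled = text.replace("-", "")
    words = previous_query.split()
    lowered = [w.lower() for w in words]
    # Pick the previous word closest to the spelled word, if any.
    match = difflib.get_close_matches(spelled, lowered, n=1, cutoff=0.6)
    if not match:
        return utterance
    words[lowered.index(match[0])] = spelled
    return " ".join(words)


print(apply_spelled_correction("show me pictures of whales", "w-a-l-e-s"))
```

Here “whales” is the only word close enough to “wales” to be replaced, so the rest of the query is kept as it was.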

Now on Tap

From the moment a restaurant reservation comes up in a chat to the moment the reservation is made, it takes just two taps.

Is the ultimate assistant still science fiction? It’s becoming more and more believable. Something very similar to the experience of the Star Trek computer or the operating system in “Her” is almost here. Behzadi wouldn’t call those movies science fiction.

The future of search is the ultimate assistant that helps you with your daily life so you can focus on the things that matter.

Q&A

Question: In B2B, talking to your laptop is still not accepted. Can you speak to the place of this technology in B2B?

The capabilities for answering questions and getting things done are the same in B2B. The underlying technology is the same, including interpreting the next search based on what was searched for before.

Question: What’s the success rate of voice search on mobile vs. desktop? How do you measure that success?

That’s a hard question to answer because the types of queries are different.

Question: A lot of us and our customers have Apple products. How can you expand these Google technologies into Apple products?

Apple phones are good phones, and we would like to reach all users. Maybe 80 percent of what he showed today works on Apple phones. The app integration is the harder part.
